| Text Corpora | ||

|---|---|---|

| Chapter 6. Types of resources |  |

This section addresses corpus linguists and corpus technologists who would like either to provide existing text corpora to the CLARIN-D infrastructure or to develop new corpora for it. The central part of this section explains the recommended format for CLARIN-D compliant text corpora on two levels, text data and metadata, and gives the reasons for this decision. In addition, a background chapter explains to corpus linguists and other scholars interested in corpora how such resources are handled by the CLARIN-D infrastructure.

Why contribute to the CLARIN-D infrastructure? Users who provide the CLARIN-D infrastructure with CLARIN-D compliant text corpora can benefit from the following features:

CLARIN-D compliant metadata can be harvested and are therefore searchable throughout the CLARIN-D infrastructure. The benefit is: your data are more visible and easier to find for other researchers and therefore more widely used. See Chapter 2, Metadata for more details.

CLARIN-D compliant texts that are provided with sufficient intellectual property rights (IPR) can be stored and maintained in the repositories of CLARIN-D service centers. The benefit is: CLARIN-D handles the distribution and access to your data while respecting their particular IPR situation.

CLARIN-D compliant corpora (i.e. their text structure) are interoperable in the CLARIN-D infrastructure: first, they are fully searchable; second, several views (HTML, TEI, text) can be generated automatically at the CLARIN-D service centers; and third, those corpora can be further processed by the CLARIN-D tool chains (see Chapter 8, Web services: Accessing and using linguistic tools). The benefit is: you can receive support for the (further) linguistic analysis of your data, including a ready-to-use toolbox. You can also mix and merge your data more easily with data provided by others.

Readers who are familiar with corpus typology, corpus compilation, and corpus quality can skip this section and go directly to the section called “Text format”. The following section gives a brief overview and is not intended to replace introductory works like [McEnery/Wilson 2001] for English and [Lemnitzer/Zinsmeister 2010] and [Perkuhn et al. 2012] for German. We also recommend taking a look at the Handbook of linguistics and communication science, vol. 2, Corpus linguistics [Lüdeling/Kytö 2009].

A common characterization of the term corpus is given by John Sinclair, a pioneer of corpus linguistics: “A corpus is a collection of naturally-occurring language text, chosen to characterize a state or variety of a language” [Sinclair 1991, page 171]. The terms corpus, text corpus and electronic (text) corpus are used interchangeably in this section to refer to a collection of texts in machine-readable form.

Corpora of written text consist of several corpus items (for a discussion about the size of a corpus see [Sinclair 2005]). Each corpus item consists either of text samples (as in the Bonner Frühneuhochdeutschkorpus) or of an entire document (as in most of the corpora of the IDS, the DWDS-Kernkorpus or the Deutsches Textarchiv (DTA, German Text Archive)), in a machine-readable form, annotated on various linguistic levels and enhanced with metadata (see Chapter 2, Metadata). The primary data is a transcription of the raw data (or source data, e.g. when the raw data is a non-digital asset, such as a book). These primary data can be annotated on different linguistic levels (see Chapter 3, Resource annotations for a detailed discussion of annotations and Chapter 1, Concepts and data categories for an account of data categories), resulting in a set of related files. Usually each annotation layer of the primary data results in a new XML instance which is stored in a separate but related file. Apart from the annotation files, metadata files have to be included as well.

One commonly distinguishes the following types of text corpora with regard to data collection and language modeling:

balanced reference corpora, i.e. text collections that are balanced over text genres such as the British National Corpus (BNC) or the DWDS-Kernkorpus [Geyken 2007],

opportunistic corpora, and corpus archives that are designed to act as primordial samples for user defined virtual corpora that are balanced or representative with respect to the intended basic population such as the Deutsches Referenzkorpus (DeReKo, [Kupietz et al. 2010]),

closed corpora, i.e. text collections that provide the entirety of texts from a former language period or a single author, if this amount is comparatively small and strictly limited, such as the Old German texts in Deutsch Diachron Digital (DDD),

open corpora, i.e. text collections corresponding to well documented subsets of text taken from a period of time or a discourse. As opposed to closed corpora, open corpora are compiled if the amount of text for a certain period of time or for a certain discourse is too large to be presented in its entirety,

specialized corpora, i.e. text collections containing a specific linguistic variety or sublanguage (e.g. a discourse or a certain text type), as opposed to general corpora, which aim at providing a representative section of the entire language material of a specific period of time (e.g. Old/Middle/Early New/New High German).

One may also find the terms synchronic and diachronic corpora: synchronic corpora represent the language used at a specific point in time, whereas diachronic corpora document the evolution of language over a certain period of time.

This section addresses the question of data compilation and distinguishes between capturing data from non-digital and from digital sources.

The sources on which the recognition of corpus texts is based may differ. In some cases, the text recognition sources are the original works (printed works or manuscripts) themselves. For practical reasons, however, transcriptions will generally be based on some kind of copy of the original, whereas the original sources are only consulted in case of doubt. Copies may be paper copies, microfiches or microfilms. In most cases, however, they will be available in a digital format. The best case scenario (when dealing with copies of text sources) would be to base the transcription on a high-resolution colour image (scan or photograph) taken directly from the original, which should be available in a lossless image format (e.g. TIFF). The fewer of these conditions are met, the lower the image quality and therefore the text recognition accuracy, e.g.

in case the source images are grey or bitonal scans,

in case the source images are based on microfilms or microfiches, or,

in case of insufficient image resolution of the source images.

High quality transcriptions of the text are a necessary prerequisite for the correctness and completeness of retrieval results. There are different methods of text recognition:

Optical character recognition (OCR): With this method texts are recognized by optical character recognition software. While OCR may be suitable for modern texts in Antiqua, performance decreases considerably with the increasing age of the text sources. Therefore, texts have to be proofread and manually corrected several times in order to eliminate recognition errors. The performance of the OCR may also be improved by preparing the scanned images in advance, e.g. by labeling zones on a page with information about whether they contain text or images, whether text is written in columns etc.

In addition, OCR software may be trained for a particular kind of text source (e.g. sources printed at the same time and by the same printer). With training, OCR performance may be increased considerably. However, since training is quite costly, it is only practicable if there is a considerable amount of homogeneous corpus texts to be transcribed. It is too time consuming if the texts are of varying typefaces and structures [Tanner et al. 2009], [Nartker et al. 2003], [Furrer/Volk 2011], [Holley 2009].

Manual transcription by one person with several proofreading run-throughs: With this method high accuracy rates may be achieved, especially if there are different correctors who are trained to work with historical texts. However, this method is time consuming, as texts have to be transcribed very carefully and corrected several times, in order to get good accuracy rates.

The double keying method requires two typists to transcribe the same text, thus producing two independent versions of a text transcription. These two versions are then compared to one another and differences are evaluated by a third person. Given that two typists are unlikely to make the same mistakes, this method is highly accurate even for older historical texts. However, this transcription method is quite costly as well, since two versions of a text have to be produced. Training of the typists might improve the transcription accuracy, especially for older texts with difficult typefaces [Haaf et al. forthcoming].
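The comparison step of double keying can be illustrated with a minimal Python sketch. It assumes that both transcriptions are available as plain strings and that a token-level comparison is sufficient; the function name `keying_differences` and the two sample sentences are made up for this illustration and do not describe the tooling of any particular project.

```python
import difflib


def keying_differences(version_a, version_b):
    """Return (token position in version_a, variant_a, variant_b) for each disagreement."""
    tokens_a = version_a.split()
    tokens_b = version_b.split()
    # autojunk=False keeps frequent tokens (articles etc.) in the alignment.
    matcher = difflib.SequenceMatcher(a=tokens_a, b=tokens_b, autojunk=False)
    differences = []
    for tag, a_start, a_end, b_start, b_end in matcher.get_opcodes():
        if tag == "equal":
            continue  # both typists agree on this stretch
        differences.append(
            (a_start, " ".join(tokens_a[a_start:a_end]), " ".join(tokens_b[b_start:b_end]))
        )
    return differences


# Hypothetical output of two typists for the same line of text.
typist_a = "Die Kinder laufen über die Haide"
typist_b = "Die Kinder lausen über die Halde"

for position, variant_a, variant_b in keying_differences(typist_a, typist_b):
    print(f"token {position}: {variant_a!r} vs. {variant_b!r}")
```

Each reported pair is a spot where the third person has to consult the source image; passages on which both typists agree are not listed.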

For text recognition, guidelines should be defined that regulate how to deal with special phenomena within the source text, where to apply normalizations, and in which cases of doubt to follow the source text. The text recognition guidelines of the DTA project (in German) are a good example.

Written language data may already be digitized and come in different formats, e.g. as plain text, office software documents or in PDF files. If so, text data has to be converted into a common standardised format, which allows for further processing.

Spoken language corpora lie at the intersection of written corpora and multimodal corpora, since their transcriptions are provided in written form whereas their underlying data are digital audio or video recordings. For more technical details see the section called “Multimodal corpora”.

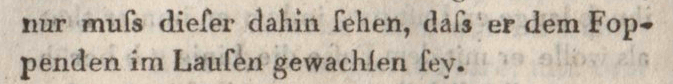

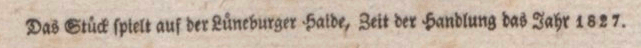

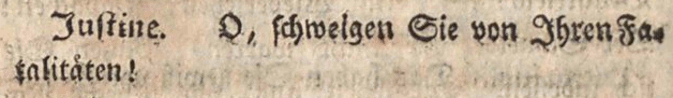

Corpus quality can be assessed on two levels: transcription quality and correctness of annotations. Both should be dealt with in the data capture phase rather than in the correction phase. One example of a problem that might arise during data capture is similar-looking letters, where each interpretation leads to a correct word. This is a typical error that is very hard to detect after the transcription phase. Some instances of common errors of this class are shown in Figure 6.1, “Transcription errors leading to valid word forms”.

“Lauſen” (to delouse) vs. “Laufen” (to run), in: Johann Christoph Friedrich Guts Muths: Spiele zur Übung und Erholung des Körpers und Geistes. Schnepfenthal, 1796, IMG 270

“Haide” (heath) vs. “Halde” (dump), in: August von Platen: Der romantische Ödipus. Stuttgart u. a., 1829, IMG 10

“schweigen” (to remain silent) vs. “schwelgen” (to wallow), in: Friedrich Wilhelm Gotter: Die Erbschleicher. Leipzig, 1789, IMG 78
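Because both readings are valid words, a spell checker will not catch errors of this kind. One possible safeguard is to flag every transcribed word for which swapping a single easily confused letter yields another valid word, so that a corrector can check it against the image. The following Python sketch assumes a hypothetical confusion table (`CONFUSABLE`) and a tiny hypothetical word list (`LEXICON`); a real project would derive both from its own sources and typefaces.

```python
# Hypothetical confusion pairs and word list, for illustration only.
CONFUSABLE = {"ſ": "f", "f": "ſ", "i": "l", "l": "i"}
LEXICON = {"lauſen", "laufen", "haide", "halde", "schweigen", "schwelgen", "geiſt"}


def ambiguous_readings(word):
    """Return other lexicon words reachable by swapping one confusable letter."""
    lowered = word.lower()
    readings = set()
    for index, char in enumerate(lowered):
        replacement = CONFUSABLE.get(char)
        if replacement is None:
            continue  # this letter is not in the confusion table
        candidate = lowered[:index] + replacement + lowered[index + 1:]
        if candidate in LEXICON:
            readings.add(candidate)
    return readings


for word in ["Lauſen", "Haide", "schweigen", "Geiſt"]:
    alternatives = ambiguous_readings(word)
    status = f"check against image, could also be {sorted(alternatives)}" if alternatives else "unambiguous"
    print(f"{word}: {status}")
```

The sketch only marks candidates for manual inspection; deciding which reading is correct still requires the source image and the context.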

Similarly, misinterpretations on the annotation level are possible.

The Deutsches Textarchiv provides a detailed workflow showing how to deal with possible annotation problems in the pre-capture phase and how detailed transcription guidelines can help to avoid transcription errors.

For more detailed information on quality control issues consult Chapter 5, Quality assurance.

TEI-P5 (see Excursus: Text Encoding Initiative – TEI-P5) is the recommended standard to be used for CLARIN-D. TEI-P5 is a widely used and very flexible standard that is adaptable to a large variety of text types. Due to this flexibility of the “full” TEI-P5 tag set (tei_all), TEI-P5-compliant corpora are generally not interoperable per se. For a discussion of some of the problems arising from this fact see [Unsworth 2011] and [Geyken et al. 2012].

![[Tip]](../images/nav/tip.svg) | Excursus: Text Encoding Initiative – TEI-P5 |

|---|---|

For the semantic structuring of texts the TEI, a consortium of international scholars and researchers founded in 1994, provides a modular, theory-neutral annotation tag set accompanied by a detailed description. The TEI guidelines are freely available and there is a wide range of tools (both open source and commercial) that can process TEI-annotated data. All these benefits make the TEI a good starting point for annotating linguistic corpora. P5, the current version of the TEI guidelines, was officially released in 2007. The TEI offers solutions for the structuring of texts, considering as many demands of digital editions as possible. In keeping with the purpose of XML, the TEI encoding standard focuses on the semantic rather than the formal structuring of texts. Text structuring may remain on a rather superficial level or may take place on a deeper text level and even include editorial information added as comments or text critical remarks. The TEI P5 tag set consists of a limited number of elements, each of which comes with a selection of specific attributes. On the level of attribute values only some recommendations are given. Apart from that, users are free to use the value names they consider most suitable in their context. This way, the application of the TEI tagset results in a broad variety of possible annotations, from which users may choose the most suitable ones for the different and specific phenomena that occur in the texts they are dealing with. However, this desirably wide range of annotation solutions leads to the problem that for the treatment of one phenomenon different annotation approaches (each of which conforms to the TEI) are possible, depending e.g. on the degree of specificity of the annotation. For instance it is possible to tag the name of a person with either the element […] For this purpose it is possible to adjust the TEI schema to the needs of a specific project. The easiest way to do so is to create an ODD (short for: “one document does it all”) document, in which the designated adjustments to the TEI schema are specified. ODD is a special TEI P5 format which allows for customizations of the TEI schema, such as excluding modules, elements, or attributes (element or class wise) from the schema, or defining particular attribute values. It is also possible to add new elements to a given tagset. For the creation of a specific ODD file the web application Roma may be used. Roma helps the user to define the project's specifications step by step. The resulting ODD file may be saved and, if necessary, modified further according to chapter 22 of the TEI guidelines. Based on the final ODD file a schema (RelaxNG, RelaxNG compact, XML Schema etc.) may then be generated using Roma. The TEI provides a tutorial on how to use Roma. Note that the TEI itself offers several specifications of the TEI guidelines as recommendations for specific annotation purposes, such as TEI-Tite, TEI-Lite or the Best practices for TEI in Libraries, because there are prototypical phenomena which should be dealt with in a common standard way. For the annotation of German texts which were originally published in print, the DTABf offers a TEI specification that is suitable for the different kinds of text structures found in printed texts. |

Among the partners of CLARIN-D there are two formats that are widely used for a very large number of documents: the DTA base format (DTABf) at the BBAW and IDS-XCES at the IDS. A TEI P5 conformant document grammar for IDS-XCES, called I5, is currently under development [Lüngen/Sperberg-McQueen 2012]. These formats are very likely to be supported in the future, since software used by many thousands of scholars is tailored to them. However, both partners intend to unify the formats in the coming months in such a way that a common TEI-P5 compliant format can be provided for CLARIN-D. In the meantime the CLARIN-D policy recommends both of these schemas, which differ from each other but are both TEI-P5 compliant and offer less variation and more semantic control than the full TEI-P5 tag set. They are discussed in more detail below, focussing especially on the requirements and benefits for the integration into the CLARIN-D infrastructure.

As a consequence of the remarks made above, there are two main levels of compatibility with the CLARIN-D infrastructure:

Text corpora that are compliant with “full” TEI-P5: Those corpora will be interoperable with other resources in the CLARIN-D infrastructure on four sub-levels:

CLARIN-D compliant metadata (CMDI profiles) can be generated from TEI-P5 headers (even though they might be underspecified).

Corpora can be stored in the repository of the CLARIN service centers of either BBAW or IDS.

Corpus formats can be transformed into the TCF format (see the section called “Interoperability and the Text Corpus Format”), hence postprocessing of these documents in any of the web service based CLARIN-D processing pipelines (WebLicht) is possible.

Texts in TEI-P5 are searchable via the federated search component of CLARIN-D.

Text corpora that are fully compatible with one of the TEI-P5 subsets of CLARIN-D, namely DTABf or IDS-XCES: In addition to the four levels of interoperability above, documents in these formats share additional benefits with regard to the CLARIN-D infrastructure. See the DTA base format (DTABf) and IDS-XCES technical information in the boxes below for details and a discussion on how deviating TEI-P5 schemas can be integrated into the recommended schemas.

![[Important]](../images/nav/important.svg) | DTA base format (DTABf) |

|---|---|

The DTA base format draws on the work of the Deutsches Textarchiv (DTA) of the BBAW, where a large reference corpus covering a wide variety of text types is currently being compiled. The tagset of DTABf is completely compliant with the TEI P5 guidelines, i.e. no new elements or attributes were added to the TEI P5 tagset. It consists of about 80 TEI P5 elements needed for the basic formal and semantic structuring of the DTA reference corpus, plus another 25 elements used for metadata structuring in the TEI header. The purpose of DTABf is to gain coherence on the annotation level (i.e. similar structural phenomena should be annotated similarly), given the heterogeneity of the text material published in the DTA with respect to time (1650–1900) and text types (fiction, functional, and scientific texts). DTABf attempts to meet the criteria of interoperability mentioned by Unsworth in that it “focuses on non-controversial structural aspects of the text and on establishing a high quality transcription of that text” [Unsworth 2011]. Therefore, the goal of DTABf is to provide as much expressiveness as necessary while being as precise as possible. For example, DTABf is restrictive not only in its selection of TEI elements but also with respect to attribute-value pairs: it allows only a limited set of values for a given attribute. Unlike initiatives such as TEI-A [Pytlik Zillig 2009], the goal of DTABf is not to build a schema that validates across as many collections as possible but to convert resources from other corpora so as to keep the structural variation as small as possible. The need for a common standardized format for the annotation of printed texts seems to conflict with the fact that different projects usually have different needs regarding how a corpus may be exploited. Therefore annotation practices vary according to the queries to be run on a given corpus.
This problem may be addressed by defining different levels of text annotation that represent different text structuring depths. The TEI recommendations for the encoding of language corpora foresee four different levels of annotation, defining required, recommended, optional, and proscribed elements. DTABf consists of such annotation levels, which serve as classes subsuming, and thereby categorizing, all available DTABf elements:

See the tabular overview of DTABf.

DTABf is continuously adapted to the requirements of external corpus projects. These extensions are mainly community-based. Up to now more than ten external projects have contributed to the extension of DTABf. For a range of projects, their TEI based schemas were converted to DTABf, including:

In addition, a CLARIN-D corpus curation project by the F-AG 1 (“Deutsche Philologie”, German philology) with the project partners BBAW (coordinator), HAB, IDS, and University of Gießen pursues the goal of converting external corpora, which were created using many different formats, into DTABf and integrating those corpora into the CLARIN-D infrastructure. This project started in September 2012. It will be extensively documented and might serve as an example of good practice for your own work. |
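The idea of a restrictive TEI subset, one that limits both the permitted elements and the permitted attribute values, can be sketched in a few lines of Python. The element list and attribute values below are hypothetical and are NOT the actual DTABf specification; in practice such restrictions would be expressed in an ODD file and enforced by the schema generated from it, not by hand-written code.

```python
import xml.etree.ElementTree as ET

TEI_NS = "http://www.tei-c.org/ns/1.0"

# Hypothetical restrictions for illustration, not the real DTABf tagset.
ALLOWED_ELEMENTS = {"TEI", "text", "body", "div", "head", "p", "hi"}
ALLOWED_VALUES = {("div", "type"): {"chapter", "preface"}}


def local_name(tag):
    """Strip the '{namespace}' prefix that ElementTree puts in front of names."""
    return tag.rsplit("}", 1)[-1]


def check_subset(xml_source):
    """List every element or attribute value that falls outside the subset."""
    problems = []
    for element in ET.fromstring(xml_source).iter():
        name = local_name(element.tag)
        if name not in ALLOWED_ELEMENTS:
            problems.append(f"element <{name}> is not in the subset")
            continue
        for attribute, value in element.attrib.items():
            allowed = ALLOWED_VALUES.get((name, local_name(attribute)))
            if allowed is not None and value not in allowed:
                problems.append(f"<{name} {attribute}='{value}'> uses a value outside the subset")
    return problems


SAMPLE = f"""<TEI xmlns="{TEI_NS}"><text><body>
<div type="chapter"><head>Erstes Kapitel</head><p>Ein <hi>Text</hi>.</p></div>
<div type="misc"><p>Ein <persName>Name</persName>.</p></div>
</body></text></TEI>"""

for problem in check_subset(SAMPLE):
    print(problem)
```

A document that passes such a check is still valid TEI P5; the subset only narrows the choices, which is what keeps structural variation between corpora small.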

![[Important]](../images/nav/important.svg) | IDS-XCES |

|---|---|

IDS-XCES is an adaptation of the XCES corpus encoding standard (see [Ide et al. 2000]). It has been the format for the semantic and structural annotation of the texts in the corpus archive of the German Reference Corpus DEREKO at the Institute for the German Language in Mannheim (IDS) since 2006. The XCES standard was introduced in 2000 as a re-definition in XML of the SGML-based corpus encoding standard CES [Ide 1998]. CES, in turn, was based on the likewise SGML-based P3 version of the TEI guidelines, as an effort to define a subset of TEI P3 elements and attributes suitable for corpus annotation. Subsequently, XCES was not kept fully compatible with the XML-based successors of P3, i.e. TEI P4 and P5, so that as of today it contains a few elements and attributes that are not in the TEI P5 guidelines. IDS-XCES is a modified version of XCES in which some content models of XCES have been redefined and some new elements and attributes have been introduced according to the needs of the DEREKO corpus descriptions. The additional attributes and elements and the revised content models have been drawn mostly from the TEI P4 and P5 guidelines, so that IDS-XCES is to a large extent compatible with TEI-P5. Currently, a TEI P5-conformant document grammar for IDS-XCES, dubbed I5, is being customized, which will be applicable to the existing IDS-XCES annotated corpora without the need to convert them [Lüngen/Sperberg-McQueen 2012]. IDS-XCES comprises 167 elements and numerous attributes to encode metadata, in particular all bibliographic metadata, and the features of the corpus and text structure, including hierarchical structure. One major principle of IDS-XCES is the tripartite structuring of an archive into corpus, document, and text. In DEREKO, a corpus for instance comprises all editions of one year of a daily newspaper and contains a number of documents in the form of the daily editions.
A document, in turn, contains a number of texts, which in the case of newspapers are the single articles, commentaries and the like. In IDS-XCES, corpora with texts of all text types are structured according to this basic scheme. IDS-XCES is also designed to encode the basic sentence segmentation. Elements for further linguistic annotations such as part-of-speech tagging, however, are not incorporated in IDS-XCES. Instead, they should be provided as XML standoff annotations pointing into the texts marked up in IDS-XCES. This allows for the provision of any number of concurrent linguistic annotations. IDS-XCES serves as the internal representation format for the corpus access platform COSMAS II at the IDS. This means that texts marked up according to IDS-XCES can be integrated in COSMAS II and thus be queried and analysed via its web interface. Moreover, all annotation features of IDS-XCES can potentially be exploited to define virtual subcorpora, as within DEREKO’s primordial sample design [Kupietz et al. 2010]. The DEREKO corpus archive is used by linguistic researchers at the IDS and institutions around the world via COSMAS II. With over 5 billion word tokens, it is the largest archive of contemporary written German. It contains newspaper articles, scientific texts, fiction, and a wide variety of other text types. |
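The principle behind standoff annotation can be illustrated with a small Python sketch: the primary text stays untouched, and each annotation layer only stores pointers (here character offsets) into it. The class `StandoffAnnotation`, the offset convention and the sample layers are assumptions made for this illustration; the actual IDS standoff files are XML documents whose exact structure is not shown here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StandoffAnnotation:
    start: int  # offset of the first character (inclusive)
    end: int    # offset after the last character (exclusive)
    layer: str  # name of the annotation layer, e.g. "pos"
    value: str  # the annotation itself


def resolve(text, annotation):
    """Return the stretch of primary text an annotation points to."""
    return text[annotation.start:annotation.end]


PRIMARY_TEXT = "Der Hund schläft."

# Two independent layers over the same, unmodified primary text.
POS_LAYER = [
    StandoffAnnotation(0, 3, "pos", "ART"),
    StandoffAnnotation(4, 8, "pos", "NN"),
    StandoffAnnotation(9, 16, "pos", "VVFIN"),
    StandoffAnnotation(16, 17, "pos", "$."),
]
LEMMA_LAYER = [
    StandoffAnnotation(9, 16, "lemma", "schlafen"),
]

for annotation in POS_LAYER + LEMMA_LAYER:
    print(f"{annotation.layer}: {resolve(PRIMARY_TEXT, annotation)!r} -> {annotation.value}")
```

Because no layer modifies the primary text, further layers, including competing analyses of the same span, can be added without touching existing ones.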

Text corpora compliant with either DTABf or IDS-XCES gain the following benefits:

Texts can be stored in the repositories of the CLARIN-D service centres.

Texts are searchable via the search engines and the federated content search interface.

Texts can be made available through corpus analysis software like COSMAS II (IDS) or DDC (BBAW).

Views for web presentation (HTML, TEI, text) can be generated automatically at the CLARIN-D service centres.

Texts can be converted automatically into TCF (see the section called “Interoperability and the Text Corpus Format”), the entry point for the CLARIN-D toolchains (see Chapter 8, Web services: Accessing and using linguistic tools). These conversion routines will be available by the end of 2012.

Metadata compliant to the CMDI metadata format can be provided (see Chapter 2, Metadata).

A full list of the commonalities and differences between IDS-XCES and DTABf as well as steps towards their unification is in preparation and will be made publicly available after completion.

There are types of corpora which are not covered by the TEI schemas described above, and for which even the TEI guidelines do not suffice, namely texts of computer-mediated communication (CMC). CMC is a genre which falls in between written and spoken communication. Some of its features cannot yet be expressed appropriately with the TEI guidelines. This concerns the macrostructural level of the “documents” (i.e. threads and logfiles) as well as microstructural elements (e.g. emoticons and addressing terms). In such cases we suggest extensions and modifications to the TEI guidelines. See [Beißwenger et al. 2012] for a detailed account of CMC-specific TEI customization.

The recommended metadata format is CMDI. Data in CMDI format can be directly made available through the CLARIN-D infrastructure. For a detailed description of the CMDI framework see Chapter 2, Metadata.

Editing tools provided within CLARIN help with the specification of CMDI formats that suit the needs of particular projects. However, to reduce the effort of preparing new CMDI formats for individual projects, it is planned to provide services that help with the provision of CMDI metadata for texts encoded according to DTABf or IDS-XCES. Future plans foresee the provision of a web form within CLARIN-D, where metadata can be recorded and subsequently exported in a structured way according to the DTABf and IDS-XCES metadata headers, or CMDI. In addition, conversion routines will be provided which allow for the conversion of DTABf and IDS-XCES metadata into CMDI.
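The first step of any such conversion is reading the bibliographic fields out of the TEI header. The following Python sketch shows this for title and author, using the standard TEI path `teiHeader/fileDesc/titleStmt`. The function name `header_metadata` and the plain dictionary it returns are assumptions made for this illustration; they are not a CMDI profile, and the planned CLARIN-D conversion routines may work quite differently.

```python
import xml.etree.ElementTree as ET

NS = {"tei": "http://www.tei-c.org/ns/1.0"}


def header_metadata(xml_source):
    """Collect title and authors from the titleStmt of a TEI header."""
    root = ET.fromstring(xml_source)
    title_stmt = root.find("tei:teiHeader/tei:fileDesc/tei:titleStmt", NS)
    if title_stmt is None:
        # Underspecified header: report empty fields instead of failing.
        return {"title": None, "authors": []}
    title = title_stmt.findtext("tei:title", default=None, namespaces=NS)
    authors = [
        author.text.strip()
        for author in title_stmt.findall("tei:author", NS)
        if author.text
    ]
    return {"title": title, "authors": authors}


# Minimal sample header; real headers carry far more information.
SAMPLE_TEI = """<TEI xmlns="http://www.tei-c.org/ns/1.0">
  <teiHeader><fileDesc><titleStmt>
    <title>Die Erbschleicher</title>
    <author>Friedrich Wilhelm Gotter</author>
  </titleStmt></fileDesc></teiHeader>
  <text><body><p/></body></text>
</TEI>"""

print(header_metadata(SAMPLE_TEI))
```

Headers that lack a title statement yield empty fields here, which mirrors the remark above that metadata generated from TEI-P5 headers may be underspecified.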

For a suitable integration into the CLARIN-D infrastructure text corpora should be provided with an explicit statement of intellectual property rights and terms and restrictions of usage. This is typically documented through a license. See Chapter 4, Access to resources and tools – technical and legal issues for more information on legal aspects in corpus distribution.

The annotation of the text structure should be based on the P5 guidelines of the TEI. The recommended text corpus format is the unified document scheme currently being developed within CLARIN-D. Until this annotation scheme is finalized we recommend using either DTABf or IDS-XCES. For both original formats lossless converters will be supplied.

The use of any of these three formats assures the interoperability of corpus texts on several levels: full text search, web display of the texts and the possibility to process the texts by the CLARIN-D tool chain. Some examples of corpora already integrated into the CLARIN-D infrastructure can be found on the DTA website.